For centuries, humans have wondered what animals are trying to tell us through the songs of whales, the chatter of dolphins, and the intricate calls of birds. Now, in 2025, technology is finally bringing us closer to understanding them.

Thanks to breakthroughs in artificial intelligence, bioacoustics, and machine learning, scientists are beginning to decode animal communication, revealing a hidden world of complexity, emotion, and structure that may change how we perceive life on Earth.

What once seemed the stuff of fantasy, talking to animals, is becoming a legitimate scientific pursuit. And the results so far are astonishing.

Listening to the Language of Nature

Every species communicates in its own way: through sound, vibration, body language, or even chemical signals. Until recently, decoding these messages was nearly impossible because their patterns were too subtle for humans to detect. That changed with the rise of powerful AI models capable of analyzing millions of acoustic recordings at once.

Projects like the Earth Species Project, Project CETI (Cetacean Translation Initiative), and Bioacoustic AI are using neural networks to map the structure of animal communication. By training algorithms on thousands of hours of recordings, from whale songs to elephant rumbles, researchers are finding consistent, grammar-like patterns in animal sounds, suggesting that some species combine and order their signals according to context in ways that resemble human language.

For example, sperm whale clicks, once thought to be random, follow repeatable patterns that vary by social group and context. Researchers call these patterns “codas”: short, rhythmic sequences of clicks. AI models suggest that codas may function like words or phrases. Similar findings are emerging from studies of prairie dogs, which appear to use distinct “alarm calls” that describe specific predators in detail.

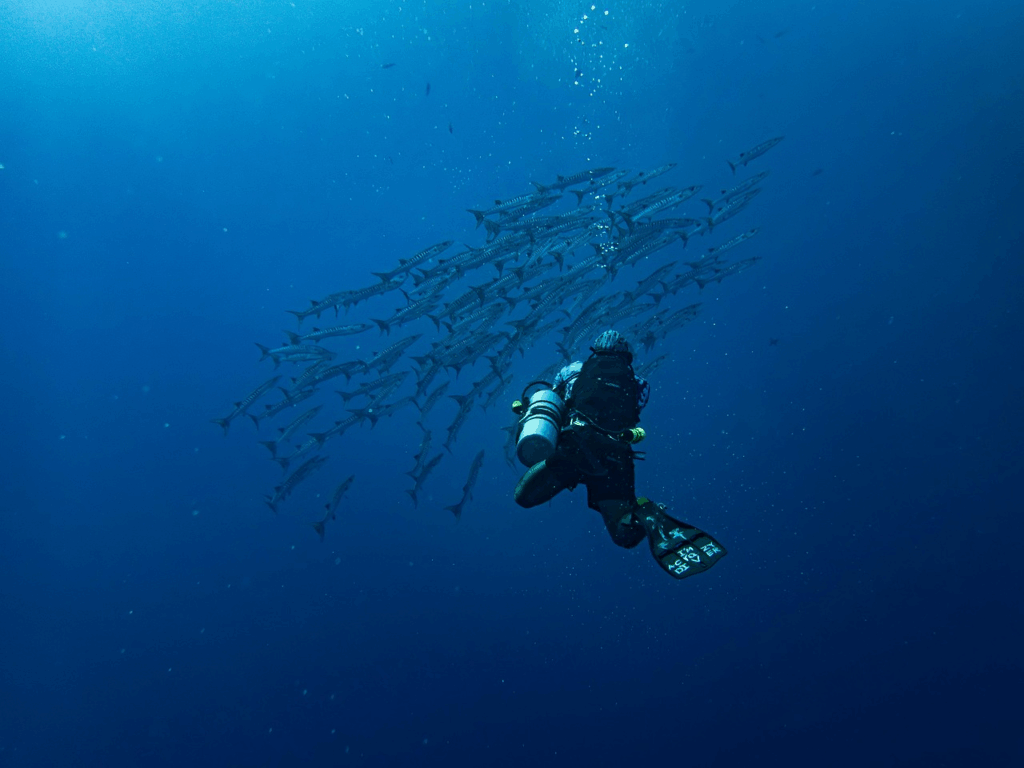

AI as Nature’s Translator

The magic of AI lies in its ability to detect structure in what once seemed chaotic. Deep learning models originally built for human language have been adapted to analyze animal vocalizations, identifying tone, rhythm, and repetition in non-human communication.

One significant development is cross-species modeling, in which a single AI system learns from recordings of multiple species at once to identify shared patterns in how creatures convey information. This could reveal a common biological foundation for communication across the animal kingdom.

Researchers are already experimenting with interactive playback: sending synthetic whale sounds or bird calls generated by AI back into the wild to observe responses. The goal isn’t to “talk” to animals in the human sense but to understand the context and meaning of their communication, and, eventually, to respond appropriately.

Beyond Sound: The Other Senses of Speech

Not all communication is audible. Bees dance, cuttlefish change colors, and elephants use low-frequency vibrations that travel through the ground for miles. Scientists are combining acoustic sensors, motion tracking, and vibration detectors to capture this multisensory “language.”

In Africa, conservationists use seismic monitors to detect elephant rumbles, some of which signal the presence of poachers or predators. In marine research, AI-equipped drones analyze how whales coordinate hunting or navigation through synchronized movement and echolocation.

This multi-channel decoding suggests that animal communication is far more complex and emotional than previously believed, involving social nuance, cooperation, and perhaps even empathy.

Why It Matters for Science and Survival

Understanding animal communication could revolutionize conservation. By decoding distress signals or migration calls, scientists can monitor the health of species in real time without physical interference. It may also improve coexistence between humans and wildlife, reducing conflict through predictive awareness, such as knowing when elephants are likely to cross farmland or when whales are migrating near shipping lanes.

The implications go beyond ecology. If intelligence and emotion are more widespread than we thought, it challenges long-held assumptions about human exceptionalism. Learning to interpret animal language could reshape ethics, biodiversity policy, and our understanding of consciousness itself.

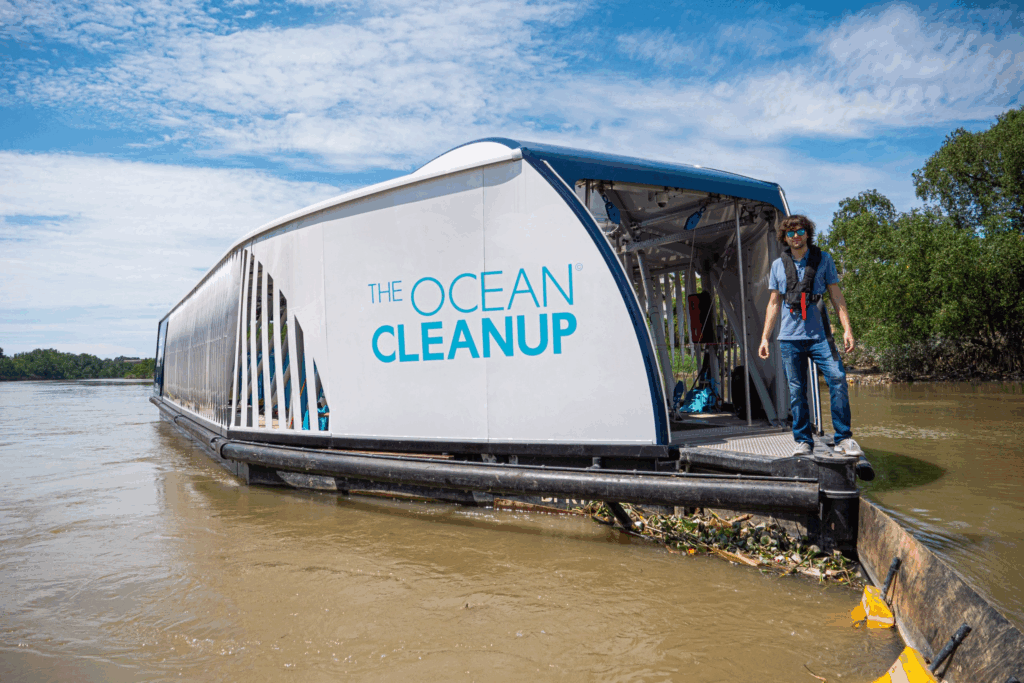

The Beginning of a New Conversation

While a universal “animal translator” is still years away, the foundation has been laid. With AI listening more carefully than any human ear could, we are beginning to perceive a world of communication that has been happening all along; we simply didn’t know how to take it in.

In decoding animals, we’re not only learning their languages; we’re also rediscovering our own connection to the natural world. The conversation has always been there; we’re finally learning how to listen.